It's Not About the AI — It's About What You Feed It

The response to my first post was overwhelming — and it made one thing clear: people are at very different stages of understanding what’s happening with AI right now. Some of you are building agents and shipping code. Others are just getting started.

So here’s what I’m going to do: I’ll post once or twice a week. Some posts will be advanced — repos, architectures, tools for builders. Others will be simpler — just ways for people to understand how the world has changed and what to actually do about it.

This is a simpler post about the most important concept to grasp right now: Context Management.

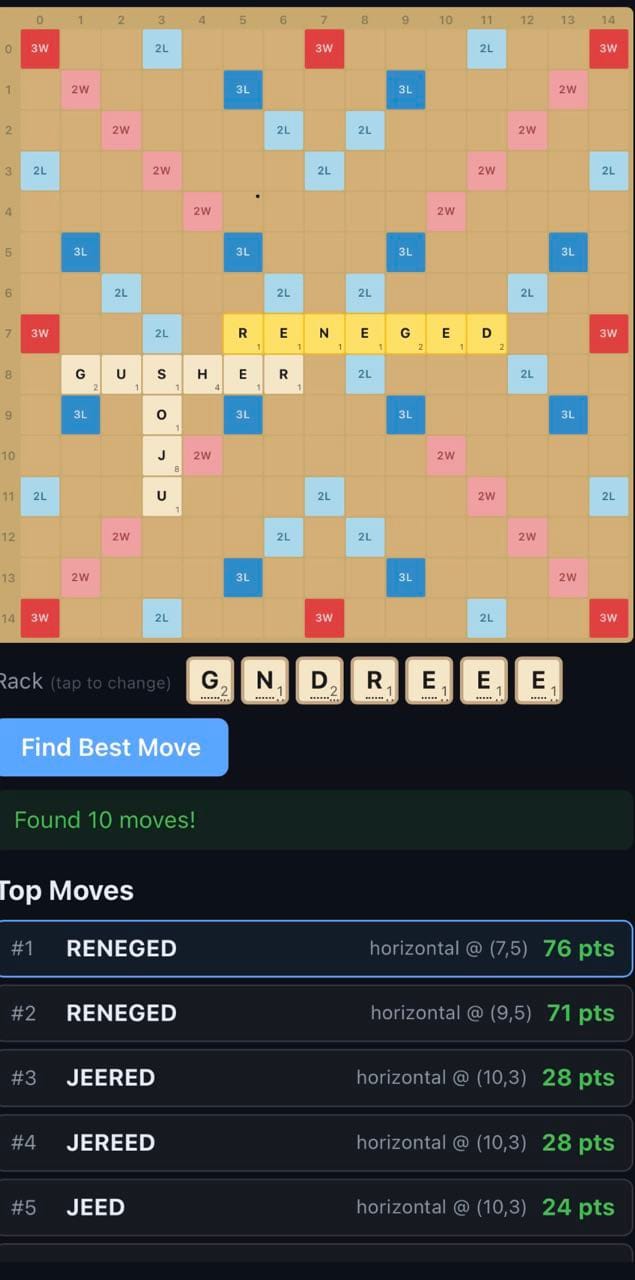

The Scrabble Board

A few weeks ago, a friend was frustrated when ChatGPT failed to solve her Scrabble board. It hallucinated. Moves that didn’t fit. Letters in the wrong place. She was ready to write it off.

But asking once and giving up isn’t how this works. I sat down and gave it a plan:

- Here’s a photo of the board

- Scan the board and build a matrix of the letters and tile positions — row by row, left to right, on the 15×15 grid

- Look up the rules of Scrabble. Move generation is a well-studied problem, so find the best known algorithm for it

- Build it in Python

- Iterate until the output is correct

- Show me the best move

Same model. Completely different result. It built a working solver in about ten minutes.
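
To make the "build a matrix" step concrete, here is a minimal sketch of what that board representation might look like. It is not the solver the model produced. The function names (parse_board, occupied_squares, horizontal_words) and the sample board are hypothetical, and it assumes the board has already been transcribed as 15 strings with "." for an empty square.

```python
BOARD_SIZE = 15  # a standard Scrabble board is a 15x15 grid


def parse_board(rows):
    """Turn 15 strings into a 15x15 matrix; '.' marks an empty square."""
    if len(rows) != BOARD_SIZE:
        raise ValueError(f"expected {BOARD_SIZE} rows, got {len(rows)}")
    board = []
    for r, row in enumerate(rows):
        if len(row) != BOARD_SIZE:
            raise ValueError(f"row {r} has {len(row)} squares, expected {BOARD_SIZE}")
        board.append([None if ch == "." else ch.upper() for ch in row])
    return board


def occupied_squares(board):
    """List (row, col, letter) for every tile, row by row, left to right."""
    return [
        (r, c, letter)
        for r, row in enumerate(board)
        for c, letter in enumerate(row)
        if letter is not None
    ]


def horizontal_words(board):
    """Read every run of two or more letters in each row."""
    words = []
    for row in board:
        text = "".join(letter or " " for letter in row)
        words.extend(w for w in text.split() if len(w) >= 2)
    return words


# Hypothetical board: a single word, CAT, on the centre row.
rows = ["." * BOARD_SIZE for _ in range(BOARD_SIZE)]
rows[7] = "......CAT......"
board = parse_board(rows)
```

Once the letters live in a grid like this, the model can check its own reading of the photo against the image and fix mistakes before it ever tries to generate a move. That check is most of what "iterate until the output is correct" means in practice.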

The difference wasn’t the AI. It was the context. One approach was “hey, look at this and answer.” The other was “here’s everything you need to know — now go figure it out.”

That’s the whole insight. The skill now isn’t prompting. It’s context management.

OK, So What Does That Mean for Finance?

My first job out of college was at PwC doing international expat tax returns. We were so slammed during busy season that all we did was compliance: plugging numbers from one form into another. No time to actually review someone’s full picture, catch what they missed, or advise on what changed.

That mechanical part is now free. And the advisory part — the work we never had time for — you can now do yourself.

It’s tax season. Here’s a relatable example: let’s prep your 2025 personal tax return.

Step 1: Find your 2024 tax return — the full 1040, not just the summary page. If it’s a PDF from TurboTax or your CPA, perfect.

Step 2: Create a folder. Drop in the 1040. Now add everything you’ve gathered for 2025 — W-2s, 1099s, brokerage statements, receipts, whatever’s sitting in your inbox. Throw in a link to the 2025 1040 form and the instructions.

Step 3: Open a terminal, navigate to that folder, and run claude (the Claude Code command). Then ask:

“Based on my 2024 return, what documents am I still missing for a complete 2025 tax package?”

That’s it. The AI will read your prior return, see every form and schedule you filed, compare it against what’s in the folder, and hand you a personalized checklist.

I’d bet it gives you a better answer, if prompted well, than an overworked first-year tax associate squeezing you in with 500 returns on April 14th.
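
If you want a feel for the comparison you are asking for, here is a crude sketch in Python. It is not what the AI does internally, and it is no substitute for having it read the actual return. The form list, the filenames, and the helper names (normalize, missing_forms, folder_filenames) are all hypothetical, and matching on filenames is an assumption that only holds if your files are named after the forms.

```python
from pathlib import Path

# Hypothetical: forms that appeared in last year's return.
PRIOR_YEAR_FORMS = ["W-2", "1099-INT", "1099-DIV", "1098"]


def normalize(name):
    """Lowercase and drop punctuation so 'W-2' can match 'w2_acme.pdf'."""
    return "".join(ch for ch in name.lower() if ch.isalnum())


def missing_forms(expected_forms, filenames):
    """Return the expected forms that no filename appears to cover."""
    normalized_files = [normalize(f) for f in filenames]
    return [
        form
        for form in expected_forms
        if not any(normalize(form) in f for f in normalized_files)
    ]


def folder_filenames(folder):
    """List the plain files sitting in the tax folder."""
    return sorted(p.name for p in Path(folder).iterdir() if p.is_file())


# Hypothetical folder contents so far.
gathered = ["2024_1040.pdf", "w2_acme.pdf", "1099-INT_bank.pdf"]
print(missing_forms(PRIOR_YEAR_FORMS, gathered))
```

With these sample filenames, the two forms left without a match are 1099-DIV and 1098. The AI does the same thing with far more context: it reads the return itself instead of guessing from filenames.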

Go Deeper

Remember that the AI isn’t just someone filling in your taxes. It’s also a tax expert, if you ask it to act like one.

- “What changed in the tax code between 2024 and 2025 that affects my specific situation?” — not the general changes. Your changes. Did a deduction you took get modified? Did a credit phase out at your income level?

- “What deductions did I take last year that I need to gather documentation for again?” — it’ll flag your charitable donations, home office, whatever you claimed, and remind you to collect the receipts.

- Download your bank statements. Upload those too. Ask: “Parse these — what did I miss? Are there deductible expenses I’m not capturing?” That’s information only you have, and you just gave the AI access to it.

This is the part that matters. The value isn’t Intuit bolting an “AI advisor” onto TurboTax. The value is you giving the AI information that only you have — because you dropped it in the folder.

Where This Goes

This is just the start. Give it more information and tell it where to get it. Build repeatable instructions and skills. Use the command line — Claude Code or Codex — so you can easily get information in and out. Start connecting MCP servers. (More details on all of this in future posts if you don’t know about it yet.)
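
One way to picture "repeatable instructions" is a small template you reuse instead of retyping the plan each time. The sketch below is an illustration only. The build_prompt function and its layout are hypothetical, not a Claude Code or Codex feature.

```python
def build_prompt(goal, steps, files):
    """Assemble one reusable prompt: the goal, a numbered plan, and the files on hand."""
    lines = [f"Goal: {goal}", "", "Plan:"]
    lines += [f"{i}. {step}" for i, step in enumerate(steps, start=1)]
    lines += ["", "Files in this folder:"]
    lines += [f"- {name}" for name in files]
    return "\n".join(lines)


# Hypothetical example reusing the tax question from this post.
prompt = build_prompt(
    goal="Find the documents I am still missing for my 2025 tax package",
    steps=[
        "Read my 2024 return and list every form and schedule filed",
        "Compare that list against the files in this folder",
        "Give me a checklist of what is missing",
    ],
    files=["2024_1040.pdf", "w2_acme.pdf"],
)
print(prompt)
```

The point is the shape, not the code: a goal, a plan, and a list of what the AI has to work with, written down once so you can run it again next year.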

The Bottom Line

The models are good. They’ve been good for a while. The bottleneck is you — specifically, how much context you’re willing to give them.

So try this example with your taxes. It’s structured, the stakes are real, and you’ll see the difference in twenty minutes.

Then start thinking about what else is sitting in folders on your computer that you’ve never thought to ask an AI about.

Show Me What You’re Building

If you try this — with your taxes or anything else — I want to hear about it. What worked, what didn’t, what surprised you. Reply to this post, DM me on LinkedIn, find me on X.

The people who figure this out first are going to have an enormous advantage. Not because they’re smarter — because they learned to manage context before everyone else did.

Let’s figure it out together.